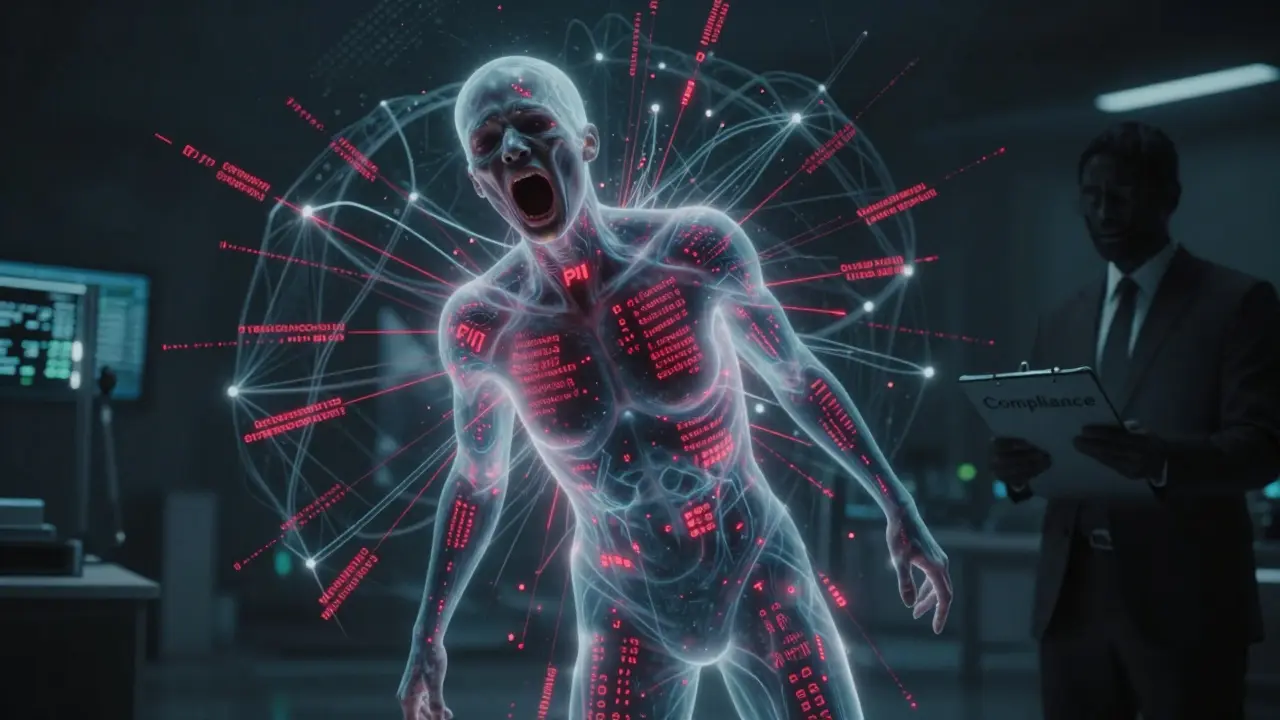

When you ask a large language model (LLM) a question, it doesn’t just pull answers from a database. It’s been trained on billions of pieces of text scraped from the internet - emails, forum posts, medical records, even private chat logs. And that’s where the problem starts. LLM data privacy isn’t about encrypting files or locking servers. It’s about stopping a machine from remembering and repeating things it wasn’t supposed to know. This isn’t science fiction. It’s happening right now.

Why Traditional Privacy Rules Don’t Work for LLMs

You’ve probably heard of GDPR or CCPA. They say: collect only what you need, store it briefly, get consent, and let people delete their data. Simple. But LLMs break all of them.

Take data minimization. LLMs are trained on massive, unfiltered datasets. A model like GPT-4 didn’t just learn from curated books. It learned from public Reddit threads, leaked customer service logs, and personal blog posts. No one gave permission. No one even knew their data was being used.

Then there’s the right to be forgotten. Under GDPR, you can demand your data be erased. But if your name, address, or medical history got fed into a model during training, there’s no button to delete it. The data isn’t stored in a file. It’s woven into millions of numbers inside the model’s weights. Researchers at Carnegie Mellon showed that with the right prompts, you can extract exact training examples - like a person’s full Social Security number - from models that were supposedly anonymized.

And here’s the kicker: traditional anonymization tools fail. If you scrub names and emails from a dataset before training, you still leave behind patterns. A model can infer someone’s identity from their writing style, job title, or even the way they mention a local coffee shop. A 2021 study found that GPT-2 could reproduce 0.23% of its training data verbatim. That doesn’t sound like much - until you realize that’s millions of private records.

The Seven Core Principles of LLM Data Privacy

Despite the chaos, there’s a framework that works. It’s not new. It’s based on the same rules that protect your medical records or bank data - just adapted for machines.

- Data minimization: Only use the data you absolutely need. Don’t train on entire public web archives. Filter out personal identifiers before ingestion.

- Purpose limitation: If you train a model to answer customer service questions, don’t let it also write medical diagnoses. Keep uses narrow and documented.

- Data integrity: Garbage in, garbage out. If your training data has biased or incorrect personal info, the model will learn and repeat it.

- Storage limitation: Don’t keep raw training data longer than needed. Once the model is trained, delete the source files.

- Data security: Encrypt data at rest and in transit. But also protect the model itself - it’s now a storage system for sensitive patterns.

- Transparency: Tell users when they’re interacting with an LLM. And if their data might be used, say how.

- Consent: This is the hardest. For public data, you can’t get consent. But for proprietary or sensitive datasets (like internal corporate chats), you must.
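
One way to make a few of these principles checkable is to attach a small record to every data source and validate it before training starts. The sketch below is illustrative only: `DataSource`, its fields, and `check_source` are hypothetical names, and the rules cover just three of the principles above (purpose limitation, storage limitation, and consent).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DataSource:
    """Hypothetical manifest entry for one training data source."""
    name: str
    purpose: str                    # documented use, e.g. "customer-support answers"
    is_public: bool                 # public web data vs. proprietary/sensitive data
    consent_obtained: bool          # only checked for non-public data
    delete_raw_by: Optional[date]   # deadline for deleting the raw source files


def check_source(src: DataSource, today: date) -> List[str]:
    """Return the principle violations for one source (empty list = OK)."""
    problems = []
    if not src.purpose.strip():
        problems.append("purpose limitation: no documented purpose")
    if src.delete_raw_by is None:
        problems.append("storage limitation: no deletion deadline")
    elif src.delete_raw_by < today:
        problems.append("storage limitation: raw data is past its deletion deadline")
    if not src.is_public and not src.consent_obtained:
        problems.append("consent: proprietary data used without consent")
    return problems


if __name__ == "__main__":
    chats = DataSource(
        name="internal-corporate-chats",
        purpose="customer-support answers",
        is_public=False,
        consent_obtained=False,
        delete_raw_by=date(2025, 1, 31),
    )
    for problem in check_source(chats, today=date(2025, 6, 1)):
        print(problem)
```

A check like this won’t tell you what a model has memorized, but it does force the questions about purpose, retention, and consent to be answered before any data reaches a training job.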

Four Practical Controls That Actually Work

You can’t just turn off the internet and hope for the best. You need layered technical controls. Here are the four most effective ones being used today.

1. Differential Privacy

Think of this as adding static to a recording. Google used it in BERT to protect user search data. It works by injecting small amounts of calibrated noise into the training process. This puts a mathematical bound on how much any single data point can influence the model, so an attacker can’t confidently tell whether that data point was in the training set. The catch? It reduces accuracy. Studies show a 5-15% drop in model performance. But for some uses - like internal chatbots that handle HR questions - that trade-off is worth it. Google’s own research showed that with adaptive noise, they could reduce privacy leakage by 82% while keeping 95% of the original model’s accuracy.

2. Federated Learning

Instead of sending data to a central server, federated learning brings the model to the data. Imagine a bank training a fraud-detection model on customer transactions. Instead of uploading all those transactions to the cloud, the model runs on each user’s device. Only the updated model weights - not the raw data - get sent back. This cuts down on data collection dramatically. But it’s expensive. Lee et al. (2021) found it needs 30-40% more computing power. Still, JPMorgan Chase used it internally and cut PII exposure by 85%.

3. PII Detection with LLMs

Old-school tools like regex or simple keyword filters miss half the threats. They catch “John Smith” but not “J. Smith, 45, cardiologist, Boston.” Enter LLM-powered PII detection. IBM’s Adaptive PII Mitigation Framework uses a specialized model to scan text for personal identifiers. In tests, it hit an F1 score of 0.95 on passport numbers, medical IDs, and financial details. F1 combines precision and recall, so a score that high means it both missed few identifiers and raised few false alarms. Compare that to Amazon Comprehend (0.54) or Presidio (0.33). Even better, context-aware systems reduce false positives by 65%. They know that “4500 Main St.” is likely an address, but “4500” in a code snippet isn’t.

4. Confidential Computing

This one’s hardware-based. Intel SGX and AMD SEV create encrypted “enclaves” - secure zones inside the CPU. Even if a hacker gets into the server, they can’t read what’s happening inside the enclave. Microsoft and Google now use this for high-risk LLM deployments. Data stays encrypted during training and inference. The model processes it, but no one - not even the cloud provider - can see the raw inputs or outputs. The downside? Latency increases by 15-20%. But for healthcare or financial apps, that’s a small price to pay.

What Happens When You Skip These Controls?

The consequences aren’t theoretical. In 2024, a major European telecom company had an LLM that regurgitated customer service transcripts verbatim in 0.23% of queries. That’s roughly 1 in every 435 interactions. Some included full names, addresses, and account numbers. They got fined €2.1 million under GDPR.

Gartner surveyed 127 enterprises. 68% had unexpected data leaks during their first LLM rollout. Healthcare orgs were hit hardest - 79% had breaches. Why? They used models trained on public medical forums without realizing those forums contained real patient records.

And it’s getting worse. The EU AI Act, which took effect in February 2025, now classifies LLMs as “high-risk” if they’re used in healthcare, banking, or public services. Non-compliance means fines up to 7% of global revenue.

Where the Industry Is Headed

The market for AI privacy tools hit $2.3 billion in 2024 and is growing 38% a year. Companies like Private AI and Lasso Security are raising millions. Microsoft’s PrivacyLens toolkit can now auto-redact PII from model outputs with 99.2% accuracy. Google’s PrivateTransformer cuts privacy leakage by 92% without hurting performance.

But here’s the hard truth: no technical fix solves the core problem. LLMs are memory machines. And memory doesn’t forget. The European Data Protection Board now requires all high-risk LLMs to pass membership inference tests - meaning attackers must fail to identify if a specific person’s data was in the training set. The bar? Less than 0.5% success rate.

By 2027, Forrester predicts 80% of enterprise LLMs will use privacy-preserving techniques. Right now, only 35% do.

What You Should Do Now

If you’re building or using an LLM:

- Map every data source. What’s in your training set? Where did it come from?

- Apply differential privacy or federated learning to sensitive data.

- Use LLM-based PII detection - not regex - to scan inputs and outputs.

- Implement confidential computing for high-risk use cases.

- Assign a privacy engineer. Not a lawyer. Not a developer. A dedicated person who understands both AI and regulation.

- Test for membership inference attacks. Don’t assume your model is safe.
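
For the last item, here’s a minimal sketch of the simplest kind of membership inference test, a loss-threshold attack: guess “this record was in the training set” whenever the model’s loss on it falls at or below a threshold. The function names are hypothetical, the per-example losses are assumed to come from your own evaluation code, and reporting the result as an “advantage” (true positive rate minus false positive rate) is one common convention, not necessarily the metric any regulator uses.

```python
from typing import Sequence, Tuple


def threshold_attack(
    member_losses: Sequence[float],
    nonmember_losses: Sequence[float],
    threshold: float,
) -> Tuple[float, float]:
    """Guess 'member' when loss <= threshold; return (TPR, FPR)."""
    tpr = sum(loss <= threshold for loss in member_losses) / len(member_losses)
    fpr = sum(loss <= threshold for loss in nonmember_losses) / len(nonmember_losses)
    return tpr, fpr


def best_advantage(
    member_losses: Sequence[float],
    nonmember_losses: Sequence[float],
) -> float:
    """Largest TPR - FPR over all observed loss values used as thresholds."""
    best = 0.0
    for threshold in sorted(set(member_losses) | set(nonmember_losses)):
        tpr, fpr = threshold_attack(member_losses, nonmember_losses, threshold)
        best = max(best, tpr - fpr)
    return best


if __name__ == "__main__":
    # Toy numbers: the model is noticeably more confident on training records.
    members = [0.1, 0.2, 0.3, 0.9]
    nonmembers = [0.8, 1.0, 1.2, 1.1]
    print(f"attack advantage: {best_advantage(members, nonmembers):.2f}")
```

An advantage near zero means this simple attacker can’t separate training records from unseen ones. A large gap is a sign the model has memorized its training data, and stronger attacks will likely do even better.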

Can LLMs be trained without storing personal data?

Yes, but it’s not easy. Techniques like federated learning and differential privacy allow training without centralizing raw data. Federated learning keeps data on users’ devices and only shares model updates. Differential privacy adds calibrated noise during training so that no individual record can be confidently identified from the model. However, even with these methods, some personal patterns may still be learned. Complete avoidance of personal data requires strict filtering before training - which means losing useful context and potentially reducing model accuracy.
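
To make the two ideas concrete, here’s a minimal sketch of one server-side aggregation step that combines them: clients send only model updates (the federated part), and the server clips each update and adds Gaussian noise to the average (the core move in differentially private training). All names are hypothetical, and `noise_std` is a hand-picked value here. A real deployment would calibrate it to a formal privacy budget, which this sketch doesn’t attempt.

```python
import math
import random
from typing import List, Optional


def clip_to_norm(update: List[float], clip_norm: float) -> List[float]:
    """Scale an update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    if norm <= clip_norm:
        return list(update)
    scale = clip_norm / norm
    return [x * scale for x in update]


def private_federated_average(
    client_updates: List[List[float]],
    clip_norm: float,
    noise_std: float,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Average clipped client updates, then add Gaussian noise per coordinate.

    Raw client data never appears here: the server only sees updates.
    Clipping limits how much any one client can move the average, and
    the noise masks whatever influence remains.
    """
    rng = rng or random.Random()
    clipped = [clip_to_norm(u, clip_norm) for u in client_updates]
    count = len(clipped)
    dim = len(clipped[0])
    averaged = [sum(u[i] for u in clipped) / count for i in range(dim)]
    return [value + rng.gauss(0.0, noise_std) for value in averaged]


if __name__ == "__main__":
    updates = [[3.0, 4.0], [0.1, -0.2], [0.0, 0.5]]
    print(private_federated_average(updates, clip_norm=1.0, noise_std=0.05))
```

The accuracy cost mentioned above shows up directly in these two knobs: a smaller `clip_norm` and a larger `noise_std` hide individual contributions better, but they also blur the signal the model is trying to learn.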

Is it possible to delete someone’s data from an LLM?

Technically, no - not reliably. Unlike a database where you can delete a row, LLMs store information as numerical weights across millions of parameters. There’s no simple “delete” button. Researchers are working on machine unlearning, which tries to remove specific data from a trained model, but it’s still experimental and requires retraining 20-30% of the model. For now, GDPR’s right to erasure is largely unenforceable for data embedded in LLMs. The only reliable method is to prevent personal data from entering training datasets in the first place.

How do LLMs leak personal data during inference?

LLMs leak personal data when users prompt them in ways that trigger memorized training examples. For example, asking “What was the email address of John Smith who worked at Acme Corp in 2021?” might cause the model to recall and repeat a real email from its training data. This happens because LLMs don’t distinguish between general knowledge and specific private facts - they’re trained to predict likely text, not to filter out sensitive content. Even harmless-seeming inputs can trigger leakage if they match patterns from private data.
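
One cheap way to watch for this kind of regurgitation is to compare model outputs against text you already know is sensitive and flag long word-for-word overlaps. The sketch below assumes you hold a set of private documents to check against. `ngrams` and `verbatim_overlap` are hypothetical helpers, and the window of six words is an arbitrary choice.

```python
from typing import Iterable, List, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """All runs of n consecutive lowercase, whitespace-separated tokens."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def verbatim_overlap(
    model_output: str,
    private_docs: Iterable[str],
    n: int = 6,
) -> List[str]:
    """Return every n-word run in the output that also appears in a private doc."""
    output_grams = ngrams(model_output, n)
    hits: Set[Tuple[str, ...]] = set()
    for doc in private_docs:
        hits |= output_grams & ngrams(doc, n)
    return sorted(" ".join(gram) for gram in hits)


if __name__ == "__main__":
    docs = ["please email john smith at acme corp about the refund today"]
    reply = "Sure, email john smith at acme corp about the refund"
    for run in verbatim_overlap(reply, docs):
        print("possible leak:", run)
```

This only catches exact repetition of text you can compare against. It won’t notice a paraphrased leak or a private fact the model inferred, so treat it as a smoke test rather than proof that a model is safe.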

What’s the difference between PII detection tools and LLM-based PII detection?

Traditional PII detection tools use rules, such as matching patterns like “XXX-XX-XXXX” for Social Security numbers. They miss context. An LLM-based system understands language. It can tell that “Dr. Elena Rivera, 58, treated at Mercy Hospital, cardiology” contains personal health information even if no numbers are present. IBM’s framework achieved an F1 score of 0.95 on complex cases like medical records, while rule-based tools like Presidio scored only 0.33. LLM-based detection adapts to context, jargon, and regional formats, making it far more effective.
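
Here’s a small sketch of the rule-based side of that comparison, so you can see exactly where it breaks. The two patterns and the `rule_based_pii` helper are illustrative assumptions, not taken from any particular tool.

```python
import re
from typing import Dict, List

# Two typical hand-written rules: US Social Security numbers and email addresses.
RULES = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def rule_based_pii(text: str) -> Dict[str, List[str]]:
    """Return every match for each rule that fires on the text."""
    found: Dict[str, List[str]] = {}
    for label, pattern in RULES.items():
        matches = pattern.findall(text)
        if matches:
            found[label] = matches
    return found


if __name__ == "__main__":
    structured = "SSN 123-45-6789, contact jane.doe@example.com today"
    contextual = "Dr. Elena Rivera, 58, treated at Mercy Hospital, cardiology"
    print(rule_based_pii(structured))   # both rules fire
    print(rule_based_pii(contextual))   # nothing fires, yet this is health data
```

The second sentence identifies a person and a medical context without containing anything a fixed pattern can latch onto. That’s the gap a context-aware detector is meant to close.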

Are there any regulations specifically for LLM data privacy?

Yes. The EU AI Act, effective February 2025, classifies LLMs as high-risk if used in healthcare, education, or public services. It requires specific privacy safeguards, including data minimization, transparency, and testing for membership inference attacks. The U.S. FTC has also issued enforcement guidelines threatening action against companies that fail to implement “reasonable” privacy measures for LLMs. GDPR and CCPA still apply, but regulators now expect additional controls beyond traditional compliance.

Nicholas Zeitler

March 11, 2026 AT 07:37

Okay, let’s be real - this isn’t just about compliance, it’s about ethics. I’ve seen teams skip differential privacy because ‘it slows things down,’ and then wonder why their chatbot spat out a customer’s full SSN in a support reply. It’s not a bug; it’s a failure of imagination. We’re building memory machines and acting like they’re calculators. You don’t train a model on raw forum logs and then shrug when it repeats someone’s medical history. That’s not AI - that’s negligence dressed up as innovation.

Differential privacy isn’t optional. Yes, it costs a few percentage points in accuracy - but what’s the cost of a lawsuit, a breach, or someone’s identity being stolen because you were too lazy to add noise? Google didn’t do it because it was easy. They did it because they knew the alternative was catastrophic. And if you’re in healthcare or finance? You’re already on borrowed time.

Confidential computing? Still underused. Why? Because it’s expensive. But let me tell you, €2.1 million in fines? That’s cheaper than a data leak. The EU AI Act isn’t a suggestion. It’s a wake-up call. And if your privacy engineer is part-time? Fire them. This needs a full-time warrior.

LLM-based PII detection? Finally. Regex can’t catch ‘J. Smith, 45, cardiologist, Boston.’ But an LLM can. IBM’s 0.95 F1 score? That’s not luck. That’s science. Stop using Presidio like it’s 2018. We’re in 2025. The tools exist. Use them.

And stop saying ‘we didn’t know.’ You had access to Carnegie Mellon’s research. You had Gartner’s report. You had the EU’s public guidelines. Ignorance isn’t a defense. It’s a liability.

Teja kumar Baliga

March 12, 2026 AT 22:10

Great breakdown! I work in India with a health tech startup, and we just implemented federated learning last month. It’s been a game-changer - no more sending patient records to the cloud. We train on-device, send only weight updates. Slower? Yes. But now our users trust us more. One patient even thanked us for ‘not storing their info.’ That’s worth the extra compute.

Also, love how you mentioned consent. Even in places with weaker laws, people deserve to know. We added a simple pop-up: ‘Your chat history helps train our model. Opt out anytime.’ 80% stayed. They just wanted to feel in control.

Small step, big impact. Keep pushing for this. We’re all in this together.

k arnold

March 14, 2026 AT 03:40

Wow. A 10-page essay on how to not get sued. Congrats, you just turned AI ethics into a corporate compliance bingo card. Differential privacy? Federated learning? Confidential computing? Sounds like a TED Talk written by a lawyer who hates fun.

Let me guess - you’re the guy who reads the EULA before clicking ‘I agree.’ Good for you. Meanwhile, the rest of us are trying to build something that doesn’t sound like a government white paper.

And yes, LLMs leak data. So what? So do humans. We’ve been leaking secrets for centuries. You think the internet didn’t already have every SSN, phone number, and medical condition on it? This isn’t a new problem. It’s just a new scapegoat.

Stop over-engineering. Just filter the worst stuff. Use regex. Call it good. Your model won’t remember your ex’s address. It’s not a diary. It’s a pattern recognizer. Chill.
