The core challenge is the "memory wall." LLMs are massive, and the cost of keeping those parameters in GPU VRAM is the primary driver of your cloud bill. To fix this, we need to look at cost-performance tuning as a multi-layer strategy: reducing the size of the model, optimizing how it handles data, and being smarter about which model handles which request.

Squeezing the Model: Quantization and Distillation

The fastest way to lower your bill is to change how the model is stored. Quantization is the process of reducing the precision of a model's weights from high-bit formats like FP16 to lower-bit formats like INT4 or FP8. Think of it as compressing a high-res image into a JPEG: you lose a tiny bit of detail, but the file size plummets.

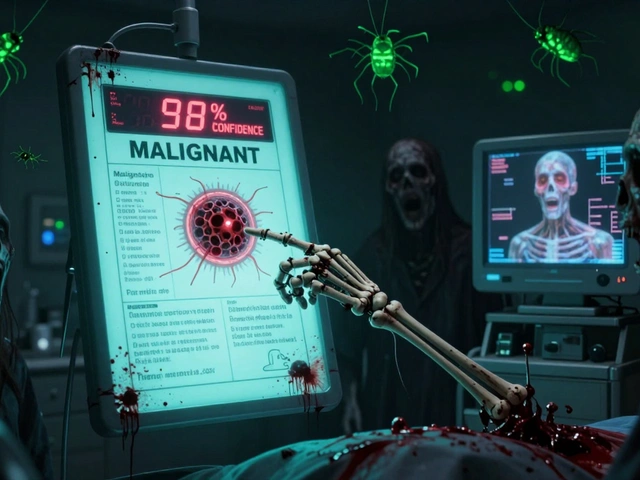

If you move to FP8, you can expect about a 2.3x speedup with a negligible 0.8% drop in accuracy. If you go further to INT4, the speedup jumps to 3.7x, though the accuracy drop hits around 1.5%. For a model like Llama-3-70B, INT4 reduces weight memory by up to 75% relative to FP16, allowing you to fit larger models on cheaper hardware. However, be careful: some users in fintech have reported that INT4 quantization can cause hallucination rates to spike, jumping from 2% to nearly 9% in strict compliance tasks. If your use case is high-stakes, stick to FP8.
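
To see where the "up to 75%" figure comes from, here is a minimal back-of-the-envelope sketch. It counts weight storage only and assumes 2 bytes per FP16 weight, 1 byte per FP8 weight, and half a byte per INT4 weight; real deployments also need room for the KV cache, activations, and quantization metadata, so treat the numbers as a lower bound. The function names are illustrative, not part of any library.

```python
# Bytes needed to store one weight at each precision (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def reduction_vs_fp16(precision: str) -> float:
    """Fraction of weight memory saved relative to FP16."""
    return 1.0 - BYTES_PER_PARAM[precision] / BYTES_PER_PARAM["fp16"]


if __name__ == "__main__":
    params = 70e9  # a 70B-parameter model
    for precision in ("fp16", "fp8", "int4"):
        gb = weight_memory_gb(params, precision)
        saved = reduction_vs_fp16(precision)
        print(f"{precision}: {gb:.0f} GB of weights, {saved:.0%} smaller than FP16")
```

For a 70B-parameter model this works out to roughly 140 GB at FP16, 70 GB at FP8, and 35 GB at INT4.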

When quantization isn't enough, consider Model Distillation, where a large "teacher" model trains a smaller "student" model to mimic its behavior. This can reduce the parameter count by 60-80% while retaining up to 97% of the original capability. It requires more upfront engineering than quantization, but as Professor Christopher Ré from Stanford notes, it's often the most overlooked way to cut costs to roughly one-tenth.
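
What "mimic its behavior" means in practice is usually a loss term that pulls the student's output distribution toward the teacher's. The sketch below shows one common formulation (temperature-softened probabilities compared with KL divergence, scaled by the temperature squared) in plain Python. It is an illustration under that assumption, not necessarily the recipe any particular team uses, and a real training loop would compute this on tensors inside a deep learning framework.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives softer probabilities."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss(teacher, [4.0, 1.0, 0.5]))  # student matches teacher
    print(distillation_loss(teacher, [0.5, 1.0, 4.0]))  # student disagrees
```

The loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge.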

| Technique | Typical Speedup | Memory Reduction | Accuracy Impact | Implementation Effort |
|---|---|---|---|---|
| FP8 Quantization | ~2.3x | ~50% | Minimal (0.8%) | Low (2-3 weeks) |
| INT4 Quantization | ~3.7x | ~75% | Low to Moderate (1.5%+) | Low (2-3 weeks) |
| Distillation | High | 60-80% | Variable (3-8% loss) | High (6-12 weeks) |

Turbocharging Throughput with vLLM and SGLang

Hardware isn't the only bottleneck; how you feed data to the GPU matters just as much. Traditional static batching is wasteful. Instead, vLLM introduces continuous batching, which dynamically groups requests as they arrive. This shifts GPU utilization from a mediocre 35% up to 85%. In real-world tests, this means processing 147 tokens per second compared to just 52 with old methods.
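
To build intuition for why continuous batching helps, here is a toy simulation. It is not vLLM's scheduler: it assumes each request needs a fixed number of decode steps (at least one), ignores prefill and memory limits, and counts how many GPU steps are needed to drain a queue with a fixed number of batch slots. All names and the workload are made up for illustration.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Each batch runs until its longest request finishes."""
    steps = 0
    for start in range(0, len(lengths), batch_size):
        steps += max(lengths[start:start + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """A finished request frees its slot for the next queued request."""
    queue = deque(lengths)
    active = []  # remaining decode steps for each in-flight request
    steps = 0
    while queue or active:
        # Fill any free slots before running the next step.
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1
        # Requests on their last step finish and leave the batch.
        active = [r - 1 for r in active if r > 1]
    return steps


def utilization(lengths, batch_size, steps):
    """Share of slot-steps that actually produced a token."""
    return sum(lengths) / (batch_size * steps)


if __name__ == "__main__":
    lengths = [8, 1, 1, 1, 8, 1, 1, 1]  # two long requests among short ones
    for name, fn in (("static", static_batching_steps),
                     ("continuous", continuous_batching_steps)):
        steps = fn(lengths, batch_size=4)
        print(f"{name}: {steps} steps, {utilization(lengths, 4, steps):.0%} utilization")
```

On this made-up workload, static batching needs 16 steps (about 34% slot utilization) while continuous batching needs 9 (about 61%). The exact gain depends entirely on how uneven your request lengths are.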

To further cut latency, implement KV Caching. This technique stores the keys and values of previous tokens so the model doesn't have to re-calculate them every time it generates a new word. In deployments of models like OpenChat, this has been shown to cut latency by 30-60%.
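
The saving is easiest to see by counting work. The sketch below counts how many per-token key/value computations are needed to generate a response, with and without a cache. It is a simplified cost model (it ignores the attention computation itself and says nothing about wall-clock latency), and the function names are illustrative.

```python
def kv_projections_without_cache(prompt_len, new_tokens):
    """Every decode step recomputes keys/values for the whole context so far."""
    return sum(prompt_len + i for i in range(new_tokens))


def kv_projections_with_cache(prompt_len, new_tokens):
    """Prefill the prompt once, then compute keys/values only for each new token."""
    if new_tokens == 0:
        return 0
    return prompt_len + (new_tokens - 1)


if __name__ == "__main__":
    prompt_len, new_tokens = 100, 50
    print("without cache:", kv_projections_without_cache(prompt_len, new_tokens))
    print("with cache:   ", kv_projections_with_cache(prompt_len, new_tokens))
```

For a 100-token prompt and 50 generated tokens, that is 6,225 key/value computations without a cache versus 149 with one. The price is memory: the cache grows with every token and every concurrent request.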

For those running multiple versions of a model (like different languages or specialized fine-tunes), SGLang enables multi-LoRA serving. Instead of dedicating one A100 GPU per model variant, you can run 32 to 128 variants on a single card. One enterprise case study showed this reduced hardware costs by 87%, saving a company $28,000 a month in cloud spend.

The Smart Route: Model Cascading and RAG

Why use a sledgehammer to crack a nut? Not every prompt needs a 70B parameter model. Model Cascading is a routing strategy where the simple queries (often around 90% of traffic) go to a small model like Mistral-7B, and only the complex ones are escalated to a premium model. This can lead to an 87% cost reduction. The trade-off is a slight latency hit, usually 15-25ms, because the router has to decide where the prompt goes.
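
Here is a minimal sketch of the idea: a rule-based router plus the arithmetic for the blended cost per request. The keyword list, the word-count threshold, and the relative prices are all hypothetical placeholders; production routers are typically small classifiers trained on your own traffic.

```python
def blended_cost(simple_share, small_cost, large_cost):
    """Average cost per request when simple traffic goes to the small model."""
    return simple_share * small_cost + (1 - simple_share) * large_cost


def route(prompt, max_simple_words=30):
    """Hypothetical rule: short prompts without escalation keywords are 'simple'."""
    hard_markers = ("prove", "analyze", "step by step", "compare")
    text = prompt.lower()
    is_hard = len(text.split()) > max_simple_words or any(m in text for m in hard_markers)
    return "large" if is_hard else "small"


if __name__ == "__main__":
    print(route("What are your opening hours?"))
    print(route("Compare these two contracts and analyze the risk."))
    # Assumed prices: the small model costs 5% of the large one per request.
    cost = blended_cost(simple_share=0.9, small_cost=0.05, large_cost=1.0)
    print(f"blended cost relative to large-only: {cost:.3f}")
```

With these assumed prices, the blended cost lands at about 0.145 of the large-only cost. The actual saving depends heavily on the price gap between your two models and on how much traffic the router can safely keep on the small one.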

Similarly, RAG (Retrieval-Augmented Generation) helps you stop paying for massive context windows. Instead of stuffing a 100-page PDF into the prompt, RAG retrieves only the relevant paragraphs. This can reduce context-related token usage by 70-85%, which is critical when using models with million-token windows where costs can spiral out of control.
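
The retrieval step can be sketched in a few lines. This example ranks paragraphs by plain word overlap with the question, which is a deliberate stand-in for the embedding model and vector store a real RAG pipeline would use; the function names and the sample document are invented for illustration.

```python
import re


def tokenize(text):
    """Lowercase word set; a stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, paragraphs, top_k=2):
    """Return the top_k paragraphs sharing the most words with the question."""
    query = tokenize(question)
    ranked = sorted(paragraphs, key=lambda p: len(query & tokenize(p)), reverse=True)
    return ranked[:top_k]


def build_prompt(question, paragraphs, top_k=2):
    """Send only the retrieved paragraphs to the model, not the whole document."""
    context = "\n".join(retrieve(question, paragraphs, top_k))
    return f"Context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    document = [
        "Refund policy: annual plans can be refunded within 30 days.",
        "Our office is located in Berlin.",
        "Support is available on weekdays.",
    ]
    print(build_prompt("What is the refund policy for annual plans?", document, top_k=1))
```

Only the matching paragraph reaches the prompt, so the token count scales with `top_k` rather than with the size of the source document.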

Avoiding the "Optimization Debt" Trap

It's tempting to crank every setting to the maximum to save money, but over-optimization has a price. This is what engineers call "optimization debt." If you push INT4 quantization too hard, you might notice that your model starts hallucinating in subtle ways that standard benchmarks don't catch, but your users certainly will.

Another pitfall is the throughput-latency trade-off. If you optimize your server for maximum throughput (processing as many requests as possible), your "tail latency" (the time the slowest 1% of users wait) can spike by 200-300%. For a fintech app where 800ms is the hard limit for a user to stay engaged, this is a dealbreaker. You have to decide if you're building for a batch-processing job where speed doesn't matter, or a real-time chat interface where it does.
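
Averages hide this problem, so measure the tail directly. The sketch below computes a nearest-rank percentile over recorded request latencies and checks it against a budget. The 800ms default mirrors the limit mentioned above; the sample data and function names are made up.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_budget(latencies_ms, budget_ms=800, pct=99):
    """True when the chosen tail percentile stays within the latency budget."""
    return percentile(latencies_ms, pct) <= budget_ms


if __name__ == "__main__":
    # Invented sample: most requests are fast, a couple are very slow.
    latencies_ms = [200] * 98 + [900, 1500]
    print("p50:", percentile(latencies_ms, 50), "ms")
    print("p99:", percentile(latencies_ms, 99), "ms")
    print("within budget:", meets_budget(latencies_ms))
```

In this invented sample the median is a comfortable 200ms, yet the p99 is 900ms and the budget check fails, which is exactly the pattern aggressive throughput tuning tends to produce.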

If you're working with Mixture of Experts (MoE) models like Mixtral-8x7B, be aware that standard quantization is less effective. While dense models see 75% memory reduction with INT4, MoE models only see about 20-35%. These models require a more specialized approach to expert routing to be truly efficient.

Which optimization should I start with?

Start with quantization (FP8) and a high-performance inference engine like vLLM. These provide the most immediate "bang for your buck" with the lowest engineering effort (usually 2-3 weeks of work) and the least risk of degrading model quality.

Does quantization always lead to hallucinations?

Not always, but the risk increases as you lower the precision. Moving from FP16 to FP8 is generally safe. Moving to INT4 can introduce errors, especially in specialized domains like finance or medicine where precise terminology is critical. Always validate your quantized model against a golden dataset of your most critical prompts.
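
A golden-dataset check can be as simple as the sketch below. It assumes each golden case pairs a prompt with a phrase the answer must contain, and it treats the model as any function from prompt to text. The two stub "models" and the sample cases are hypothetical; in practice you would plug in calls to your FP16 baseline and your quantized build, and you would likely want a richer scoring method than substring matching.

```python
def failed_prompts(golden_set, generate):
    """Return prompts whose output is missing the required phrase."""
    return [
        case["prompt"]
        for case in golden_set
        if case["must_contain"].lower() not in generate(case["prompt"]).lower()
    ]


def passes_gate(golden_set, generate, max_failure_rate=0.0):
    """True when the share of failing golden prompts is within the threshold."""
    failures = failed_prompts(golden_set, generate)
    return len(failures) / len(golden_set) <= max_failure_rate


# Hypothetical golden prompts and stub models, for illustration only.
GOLDEN_SET = [
    {"prompt": "Expand the acronym KYC.", "must_contain": "Know Your Customer"},
    {"prompt": "Expand the acronym AML.", "must_contain": "Anti-Money Laundering"},
]


def baseline_stub(prompt):
    answers = {
        "KYC": "KYC stands for Know Your Customer.",
        "AML": "AML stands for Anti-Money Laundering.",
    }
    return next(answer for key, answer in answers.items() if key in prompt)


def quantized_stub(prompt):
    # Simulates a degraded model that garbles one domain term.
    return baseline_stub(prompt).replace("Anti-Money Laundering", "Anti-Money Lending")


if __name__ == "__main__":
    print("baseline passes: ", passes_gate(GOLDEN_SET, baseline_stub))
    print("quantized passes:", passes_gate(GOLDEN_SET, quantized_stub))
    print("failing prompts: ", failed_prompts(GOLDEN_SET, quantized_stub))
```

Running the same gate against both builds makes a regression visible before your users find it.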

How does RAG help with cost?

RAG reduces the number of tokens sent to the model by replacing a massive document with a few highly relevant snippets. Since most LLM providers and self-hosted setups charge or scale by token count, reducing the prompt size directly lowers the computational cost per request.

What is the difference between continuous batching and static batching?

Static batching waits for a group of requests to finish before starting a new group. If one request is very long, it holds up all the others. Continuous batching processes requests as they finish and inserts new ones immediately, dramatically increasing GPU utilization and overall throughput.

Is model distillation worth the effort?

Yes, if you have a very specific task and high volume. While it takes 6-12 weeks to implement, a distilled student model can be significantly smaller and faster than a quantized general-purpose model while maintaining similar accuracy for that specific task.

Next Steps for Implementation

If you're just starting, follow this path based on your role:

- For the ML Engineer: Deploy vLLM first. Enable continuous batching and implement KV caching. This gives you an immediate performance boost without touching the model weights.

- For the Infrastructure Lead: Look into FP8 quantization. Check if your current GPU fleet (like A100s or H100s) supports these formats to maximize VRAM efficiency.

- For the Product Owner: Evaluate if you can use Model Cascading. Determine which prompts are "simple" and can be handled by a 7B model, and which truly require the "big gun" model.

Pamela Watson

April 14, 2026 AT 12:49

Everyone knows FP8 is the way to go! 😊 Just do it and save your money! 💸

Michael Thomas

April 15, 2026 AT 01:26

American GPUs dominate this space for a reason. Stick to H100s. Best hardware in the world.

michael T

April 15, 2026 AT 21:56

This whole memory wall thing is a total nightmare. I've spent countless sleepless nights wrestling with VRAM and it's just a soul-crushing void of despair. It's honestly heartbreaking how much money we bleed just to get a bot to speak coherently. I'm just drowning in these cloud bills and it feels like the industry is just eating us alive one token at a time.

Karl Fisher

April 16, 2026 AT 16:06

While the guide is quaint, those of us operating at a truly elite architectural level usually implement custom kernels long before touching vLLM. It's simply a matter of taste and intellectual rigor. But for the masses, I suppose this is a charming little starting point. Truly delightful to see the common folk attempting to optimize!

Abert Canada

April 17, 2026 AT 18:24

vLLM is basically the gold standard now. Get on board or get left behind. It's honestly embarrassing how some people are still using static batching in 2024.

Xavier Lévesque

April 18, 2026 AT 08:31

Oh wow, a guide on how to save money. How absolutely revolutionary. I'm sure we're all just breathless with anticipation to spend 12 weeks on distillation just to save a few bucks. Truly a masterclass in efficiency.

Thabo mangena

April 20, 2026 AT 03:55

It is most commendable to see such a comprehensive breakdown of these technical efficiencies. One must appreciate the meticulous nature of the trade-offs presented here. It provides an exceptionally optimistic outlook for the scalability of open-source intelligence across our diverse global infrastructures. I believe this approach will greatly benefit many developers seeking a sustainable path forward in their computational endeavors.